Prasanth Sasikumar

Research Fellow

Prasanth joined the Augmented Human Lab at the National University of Singapore (NUS) as a Research Fellow. His research focuses on augmented reality (AR) and virtual reality (VR), in particular on using multimodal input and remote collaboration to enhance user experiences in immersive environments. His PhD investigated the use of multimodal input for remote collaboration in AR and VR. Before that, he earned a master's degree in Human-Computer Interaction from HIT Lab NZ, where he studied the use of haptics in immersive environments.

-

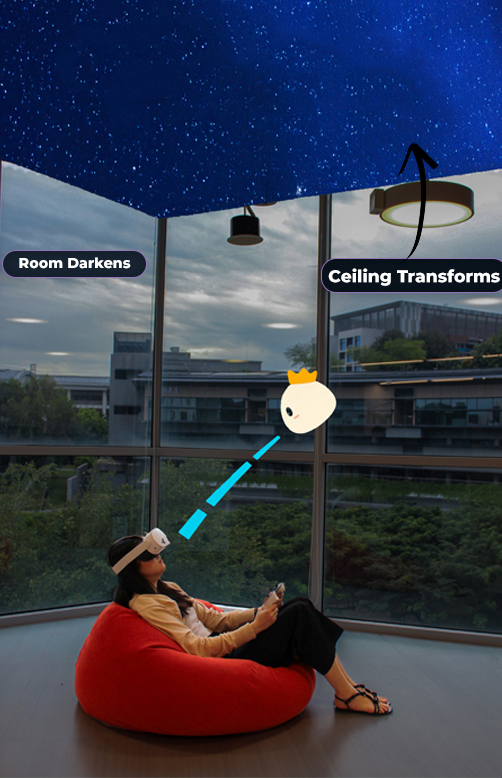

Interrupting Autopilot: Evaluating Sensory Interventions for Momentary Self-Regulation

Dissanayake, D., Mysan, M., Luo, Y., Antoni, T., Cleveland, P., Sasikumar, P., Nanayakkara, S. C., 2026. Interrupting Autopilot: Evaluating Sensory Interventions for Momentary Self-Regulation. In Extended Abstracts of the 2026 CHI Conference on Human Factors in Computing Systems (CHI EA ’26)

-

VRSense: An Explainable System to Help Mitigate Cybersickness in VR Games

Dissanayake, D., Gupta, C., Sasikumar, P. and Nanayakkara, S.C., 2025, April. VRSense: An Explainable System to Help Mitigate Cybersickness in VR Games. In Proceedings of the Extended Abstracts of the CHI Conference on Human Factors in Computing Systems (pp. 1-7).

-

VR.net: A Real-world Dataset for Virtual Reality Motion Sickness Research

Wen, E., Gupta, C., Sasikumar, P., Billinghurst, M., Wilmott, J., Skow, E., Dey, A., Nanayakkara, S.C. VR.net: A Real-world Dataset for Virtual Reality Motion Sickness Research. The 31st IEEE Conference on Virtual Reality and 3D User Interfaces, 2024. Best Paper Award!

-

SeEar: Tailoring Real-time AR Caption Interfaces for Deaf and Hard-of-Hearing (DHH) Students in Specialized Educational Settings

Samaradivakara, Y., Ushan, T., Pathirage, A., Sasikumar, P., Karunanayaka, K., Keppitiyagama, C., Nanayakkara, S.C., 2024. SeEar: Tailoring Real-time AR Caption Interfaces for Deaf and Hard-of-Hearing (DHH) Students in Specialized Educational Settings. In Extended Abstracts of the CHI Conference on Human Factors in Computing Systems (CHI EA ’24), May 11–16, 2024, Honolulu, HI, USA.

-

Exploring an Extended Reality Floatation Tank Experience to Reduce the Fear of Being in Water

Montoya, M.F., Qiao, H., Sasikumar, P., Elvitigala, D.S., Pell, S.J., Nanayakkara, S.C., Mueller, F. ‘Floyd’, 2024. Exploring an Extended Reality Floatation Tank Experience to Reduce the Fear of Being in Water. In Proceedings of the CHI Conference on Human Factors in Computing Systems (CHI ’24), May 11–16, 2024, Honolulu, HI, USA.

-

A User Study on Sharing Physiological Cues in VR Assembly Tasks

Sasikumar, P., Hajika, R., Gupta, K., Selvan, T., Pai, Y.S., Bai, H., Nanayakkara, S.C., and Billinghurst, M. 2024. A User Study on Sharing Physiological Cues in VR Assembly Tasks. In IEEE Conference on Virtual Reality and 3D User Interfaces, 2024.

-

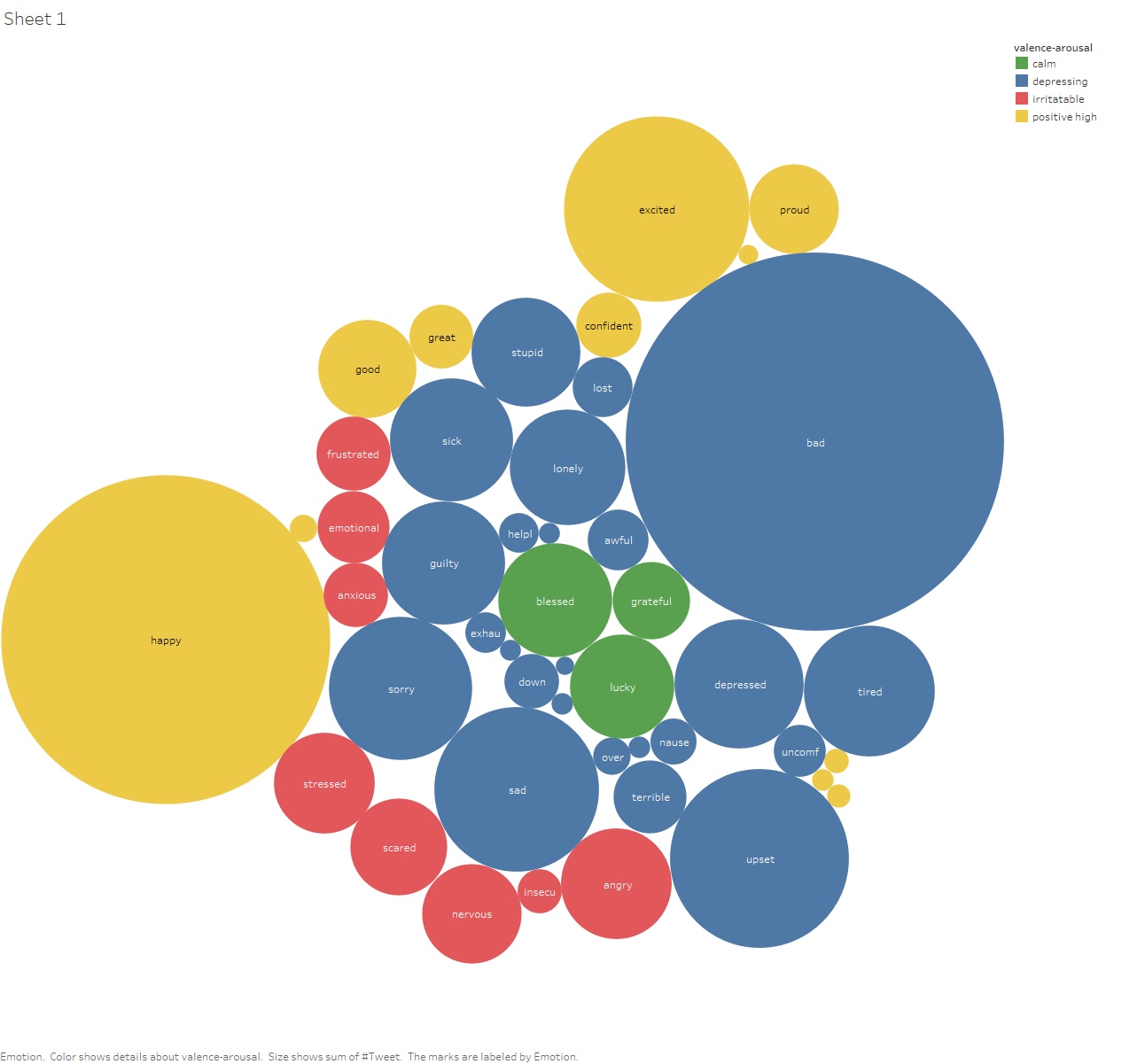

EMO-KNOW: A Large Scale Dataset on Emotion-Cause

Nguyen, M., Samaradivakara, Y., Sasikumar, P., Gupta, C. and Nanayakkara, S.C., 2023, December. EMO-KNOW: A Large Scale Dataset on Emotion-Cause. In Findings of the Association for Computational Linguistics: EMNLP 2023 (pp. 11043-11051).